Biometric Mirror highlights flaws in artificial intelligence

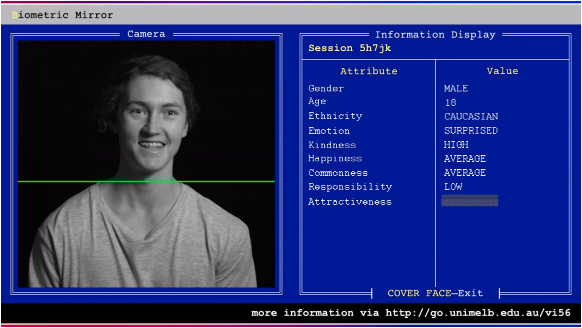

In a world-first, University of Melbourne researchers have designed an artificial intelligence (AI) system to detect and display people’s personality traits and physical attractiveness based solely on a photo of their face.

The system, called Biometric Mirror, is designed to investigate people's understanding of AI and how they respond to information about their unique traits.

When someone stands in front of Biometric Mirror, the system detects a range of facial characteristics in seconds. It then compares the user's data with that of thousands of facial photos whose psychometric traits had been rated by a group of crowd-sourced responders.

Biometric Mirror displays 14 characteristics, from gender, age and ethnicity to attractiveness, weirdness and emotional stability. The longer a person stands there, the more personal the traits become.

The research project, led by Dr Niels Wouters from the Centre for Social Natural User Interfaces (SocialNUI) and Science Gallery Melbourne, explores the concerns this technology raises around consent, data storage and algorithmic bias.

“With the rise of AI and big data, government and businesses will increasingly use CCTV cameras and interactive advertising to detect emotions, age, gender and demographics of people passing by,” Dr Wouters said.

“Our study aims to provoke challenging questions about the boundaries of AI. It shows users how easy it is to implement AI that discriminates in unethical or problematic ways which could have societal consequences. By encouraging debate on privacy and mass-surveillance, we hope to contribute to a better understanding of the ethics behind AI.”

Biometric Mirror highlights the potential real-world consequences of algorithmic bias and assumptions.

“While collecting personal information about your shopping preferences to tailor an individual service may seem harmless, capturing this information without consent makes it impossible to know if a prediction is based on correct data,” Dr Wouters said.

“The use of AI is a slippery slope that extends beyond the realm of shopping and advertising. Imagine having no control over an algorithm that wrongfully considers you unfit for management positions or ineligible for university degrees, or that shares your photo publicly without your consent.

“One of your traits is chosen – say, your level of responsibility – and Biometric Mirror asks you to imagine this information is now being shared with your insurer or future employer,” Dr Wouters said.

“This project is a transparent demonstration of the potential consequences for individuals.”

Dr Wouters said it was important to note that Biometric Mirror is not a tool for psychological analysis.

“Biometric Mirror only calculates the estimated public perception towards facial appearance,” he said.

“It is limited by its inaccuracy, because only a relatively small and crowd-sourced dataset informed its design. It is inappropriate to draw meaningful conclusions about psychological states from Biometric Mirror.”

Biometric Mirror is on display in the Eastern Resource Centre on the Parkville campus until early September. A series of interviews and observations will complement the study to reveal people's ethical, social and cultural concerns. Members of the public aged 16 and over can also take part during Science Gallery Melbourne's exhibition Perfection, which runs from 12 September to 3 November.